## Background

In #6380 we fixed a privilege escalation bug that allowed project members
with permission to add users to assign those users roles with a higher
permission set than their own. That PR essentially restricts you to
assigning only roles that you hold yourself, unless you are an Admin or
Project owner.

This fix broke expectations for another customer who needed to have a
project owner without the DELETE_PROJECT permission. The fix above meant
that their custom project owner role could only assign users to the
project with the role that they possessed.

## Fix

Instead of looking directly at which role the role granter has, this PR

addresses the issue by making the assessment based on the permission

sets of the user and the roles to be granted. If the granter has all the

permissions of the role being granted, the granter is permitted to

assign the role.
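
The permission-set check described above can be sketched as follows. This is a minimal illustration, not the code from this PR: the `ProjectPermission` shape, the `canGrantRole` name, and the example permission lists are assumptions.

```typescript
// Hypothetical permission shape, for illustration only.
type ProjectPermission = { name: string; environment?: string };

// Key a permission by value so two equal permissions compare as equal.
const permissionKey = (p: ProjectPermission): string =>
    `${p.name}:${p.environment || ''}`;

// The granter may assign a role only if they hold every permission in it.
function canGrantRole(
    granterPermissions: ProjectPermission[],
    rolePermissions: ProjectPermission[],
): boolean {
    const held = new Set(granterPermissions.map(permissionKey));
    return rolePermissions.every((p) => held.has(permissionKey(p)));
}

// A custom owner role without DELETE_PROJECT, as in the customer case above.
const customOwnerPermissions: ProjectPermission[] = [
    { name: 'UPDATE_PROJECT' },
    { name: 'CREATE_FEATURE' },
];
const memberRolePermissions: ProjectPermission[] = [{ name: 'CREATE_FEATURE' }];
const fullOwnerRolePermissions: ProjectPermission[] = [
    { name: 'UPDATE_PROJECT' },
    { name: 'CREATE_FEATURE' },
    { name: 'DELETE_PROJECT' },
];

console.log(canGrantRole(customOwnerPermissions, memberRolePermissions)); // true
console.log(canGrantRole(customOwnerPermissions, fullOwnerRolePermissions)); // false
```

With this kind of check, the custom owner can hand out any role whose permissions are a subset of their own, regardless of which named role they hold.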

## Other considerations

The endpoint to get roles was changed in this PR. It previously only

retrieved the roles that the user had in the project. This no longer

makes sense because the user should be able to see other project roles

than the one they themselves hold when assigning users to the project.

The drawback of returning all project roles is that the list may contain
a project role that the user cannot assign, because they do not hold all
the permissions the role requires. This was discussed internally and we
decided that it's an acceptable trade-off for now, because returning a
role list based on comparing permission sets is not trivial. We would
have to retrieve each project role with its permissions from the
database, and run the same in-memory check against the user's
permissions to determine which roles to return from this endpoint.
Instead we opted for returning all project roles and displaying an error
if you try to assign a role that you do not have access to.

## Follow up

When this is merged, there's no longer any need for the frontend logic that

filters out roles in the role assignment form. I deliberately left this

out of the scope for this PR because I couldn't wrap my head around

everything that was going on there and I thought it was better to pair

on this with @chriswk or @nunogois in order to make sure we get this

right as the logic for this filtering seemed quite complex and was

touching multiple different components.

---------

Co-authored-by: Fredrik Strand Oseberg <fredrikstrandoseberg@Fredrik-sin-MacBook-Pro.local>

This appears to have been an oversight in the original implementation

of this endpoint. This seems to be the primary point of this

permission. Additionally, the docs mention that this permission should

allow you to do just that.

Note: I've not added any tests for this, because we don't typically add

tests for it. If we have an example to follow, I'd be very happy to add

it, though.

https://linear.app/unleash/issue/2-2592/updateimprove-a-segment-via-api-call

Related to https://github.com/Unleash/unleash/issues/7987

This does not make the endpoint necessarily better - It's still a PUT

that acts as a PUT in some ways (expects specific required fields to be

present, resets the project to `null` if it's not included in the body)

and a PATCH in others (ignores most fields if they're not included in

the body). We need to have a more in-depth discussion about developing

long-term strategies for our API and respective OpenAPI spec.

However, this at least includes the proper schema for the request body,

which is slightly better than before.

Previously we expected the tag to look like `type:value`. Now we treat
everything after the first colon as the value, so a query such as
`type:this:still:is:value` no longer breaks.
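
Splitting on the first colon only can be sketched like this. The `parseTagQuery` helper is a hypothetical name used for illustration, not the function changed in this PR.

```typescript
// Split a `type:value` tag query on the first colon only, so that any
// further colons stay part of the value.
function parseTagQuery(
    tagQuery: string,
): { type: string; value: string } | undefined {
    const separatorIndex = tagQuery.indexOf(':');
    if (separatorIndex === -1) {
        return undefined; // not a `type:value` query
    }
    return {
        type: tagQuery.slice(0, separatorIndex),
        value: tagQuery.slice(separatorIndex + 1),
    };
}

const parsedTag = parseTagQuery('type:this:still:is:value');
console.log(parsedTag); // { type: 'type', value: 'this:still:is:value' }
```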

Updates the instance stats endpoint with

- maxEnvironmentStrategies

- maxConstraints

- maxConstraintValues

It adds the following rows to the front end table:

- segments (already in the payload, just not used for the table before)

- API tokens (separate rows for type, + one for total) (also existed

before, but wasn't listed)

- Highest number of strategies used for a single flag in a single

environment

- Highest number of constraints used on a single strategy

- Highest number of values used for a single constraint

This PR fixes an issue where the number of flags belonging to a project

was wrong in the new getProjectsForAdminUi.

The cause was that we now join with the events table to get the most
recent "lastUpdatedAt" data. This produced multiple rows for each flag,
so we counted the same flag multiple times. The fix was to use a
"distinct".

Additionally, I used this as an excuse to write some more tests that I'd

been thinking about. And in doing so also uncovered another bug that

would only ever surface in verrry rare conditions: if a flag had been

created in project A, but moved to project B AND the

feature-project-change event hadn't fired correctly, project B's last

updated could show data from that feature in project A.

I've also taken the liberty of doing a little bit of cleanup.
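
The double counting described above can be illustrated in memory. This is only a sketch of the effect: the real fix is a `distinct` in the SQL query, and the `JoinedRow` shape and function names here are assumptions.

```typescript
// Hypothetical result rows of joining flags with their events:
// one row per (flag, event) pair, so a flag with two events appears twice.
type JoinedRow = { project: string; featureName: string; eventCreatedAt: string };

// Naive count: counts rows, so flags with several events are counted repeatedly.
function countRows(rows: JoinedRow[], project: string): number {
    return rows.filter((row) => row.project === project).length;
}

// Distinct count: counts each flag name once per project.
function countDistinctFlags(rows: JoinedRow[], project: string): number {
    const flagNames = new Set<string>();
    for (const row of rows) {
        if (row.project === project) {
            flagNames.add(row.featureName);
        }
    }
    return flagNames.size;
}

const joinedRows: JoinedRow[] = [
    { project: 'default', featureName: 'flag-a', eventCreatedAt: '2024-08-01' },
    { project: 'default', featureName: 'flag-a', eventCreatedAt: '2024-08-02' },
    { project: 'default', featureName: 'flag-b', eventCreatedAt: '2024-08-03' },
];

console.log(countRows(joinedRows, 'default')); // 3
console.log(countDistinctFlags(joinedRows, 'default')); // 2
```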

## About the changes

When storing last seen metrics we no longer validate at insert time that

the feature exists. Instead, there's a job cleaning up on a regular

interval.

Metrics for features whose names are longer than 255 characters make the
whole batch fail, resulting in metrics being lost.

This PR helps mitigate the issue and also logs the long feature names.

Implements empty responses for the fake project read model. Instead of

throwing a not implemented error, we'll return empty results.

This makes some of the tests in enterprise pass.

This PR touches up a few small things in the project read model.

Fixes:

Use the right method name in the query/method timer for

`getProjectsForAdminUi`. I'd forgotten to change the timer name from the

original method name.

Spells the method name correctly for the `getMembersCount` timer (it

used to be `getMemberCount`, but the method is called `getMembersCount`

with a plural s).

Changes:

Call the `getMembersCount` timer from within the `getMembersCount`

method itself. Instead of setting that timer up from two different

places, we can call it in the method we're timing. This wasn't a problem

previously, because the method was only called from a single place.

Assuming we always wanna time that query, it makes more sense to put the

timing in the actual method.

Hooks up the new project read model and updates the existing project

service to use it instead when the flag is on.

In doing so, it:

- creates a composition root for the read model

- includes it in IUnleashStores

- updates some existing methods to accept either the old or the new

model

- updates the OpenAPI schema to deprecate the old properties

Creates a new project read model exposing data to be used for the UI and

for the insights module.

The model contains two public methods, both based on the project store's

`getProjectsWithCounts`:

- `getProjectsForAdminUi`

- `getProjectsForInsights`

This mirrors the two places where the base query is actually in use

today and adapts the query to those two explicit cases.

The new `getProjectsForAdminUi` method also contains data for last flag

update and last flag metric reported, as required for the new projects

list screen.

Additionally the read model contains a private `getMembersCount` method,

which is also lifted from the project store. This method was only used

in the old `getProjectsWithCounts` method, so I have also removed the

method from the public interface.

This PR does *not* hook up the new read model to anything or delete any

existing uses of the old method.

## Why?

As mentioned in the background, this query is used in two places, both

to get data for the UI (directly or indirectly). This is consistent with

the principles laid out in our [ADR on read vs write

models](https://docs.getunleash.io/contributing/ADRs/back-end/write-model-vs-read-models).

There is an argument to be made, however, that the insights module uses

this as an **internal** read model, but the description of an internal

model ("Internal read models are typically narrowly focused on answering

one question and usually require simple queries compared to external

read models") does not apply here. It's closer to the description of

external read models: "View model will typically join data across a few

DB tables" for display in the UI.

## Discussion points

### What about properties on the schema that are now gone?

The `project-schema`, which is delivered to the UI through the

`getProjects` endpoint (and nowhere else, it seems), describes

properties that will no longer be sent to the front end, including

`defaultStickiness`, `avgTimeToProduction`, and more. Can we just stop

sending them or is that a breaking change?

The schema does not define them as required properties, so in theory,

not sending them isn't breaking any contracts. We can deprecate the

properties and just not populate them anymore.

At least that's my thought on it. I'm open to hearing other views.

### Can we add the properties in fewer lines of code?

Yes! The [first commit in this PR

(b7534bfa)](b7534bfa07)

adds the two new properties in 8 lines of code.

However, this comes at the cost of diluting the `getProjectsWithCounts`

method further by adding more properties that are not used by the

insights module. That said, that might be a worthwhile tradeoff.

## Background

_(More [details in internal slack

thread](https://unleash-internal.slack.com/archives/C046LV6HH6W/p1723716675436829))_

I noticed that the project store's `getProjectsWithCounts` is used in

exactly two places:

1. In the project service method which maps directly to the project

controller (in both OSS and enterprise).

2. In the insights service in enterprise.

In the case of the controller, that’s the termination point. I’d guess

that when written, the store only served the purpose of showing data to

the UI.

In the event of the insights service, the data is mapped in

getProjectStats.

But I was a little surprised that they were sharing the same query, so I

decided to dig a little deeper to see what we’re actually using and what

we’re not (including the potential new columns). Here’s what I found.

Of the 14 already existing properties, insights use only 7 and the

project list UI uses only 10 (though the schema mentions all 14 (as far

as I could tell from scouring the code base)). Additionally, there’s two

properties that I couldn’t find any evidence of being used by either:

- default stickiness

- updatedAt (this is when the project was last updated; not its flags)

While adding privateProjectsChecker, I noticed that the events
composition root is hardly used at all.

Refactored the code so we no longer call `new EventService`.

## About the changes

When reading feature environment strategies that have no segments, we
now return an empty list of segments. Previously the value was
undefined, leading to overly verbose change request diffs.
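
The normalization can be sketched like this. The row and strategy types and the `withSegments` helper are hypothetical and only illustrate the idea of defaulting to an empty list.

```typescript
// Hypothetical shapes: a raw row may lack segments, the returned strategy never does.
type StrategyRow = { id: string; segments?: number[] };
type StrategyWithSegments = { id: string; segments: number[] };

// Default a missing segments property to an empty list so that two
// strategies without segments compare as identical in a diff.
function withSegments(row: StrategyRow): StrategyWithSegments {
    return { ...row, segments: row.segments || [] };
}

console.log(withSegments({ id: 'a' })); // { id: 'a', segments: [] }
console.log(withSegments({ id: 'b', segments: [1, 2] })); // { id: 'b', segments: [ 1, 2 ] }
```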

<img width="669" alt="Screenshot 2024-08-14 at 16 06 14"

src="https://github.com/user-attachments/assets/1ac6121b-1d6c-48c6-b4ce-3f26c53c6694">

For easy gitar integration, we need to have a boolean in the event
payload.

We might rethink it when we add variants, but currently enabled with

variants is not used.

Changes the event search handling, so that searching by user uses the
user's ID, not the "createdBy" name in the event. This aligns better
with what the OpenAPI schema describes.

Adds an endpoint to return all event creators.

An interesting point is that it does not return the user object, but

just created_by as a string. This is because we do not store user IDs

for events, as they are not strictly bound to a user object, but rather

a historical user with the name X.

Previously, people were able to send arbitrary data as the feature type.
Now it is validated.

Fixes https://github.com/Unleash/unleash/issues/7751

---------

Co-authored-by: Thomas Heartman <thomas@getunleash.io>

Changed the URL of event search to search/events to align with
search/features. Along with that, created a search controller to keep
all searches under it.

Added first test.

https://linear.app/unleash/issue/2-2469/keep-the-latest-event-for-each-integration-configuration

This makes it so we keep the latest event for each integration

configuration, along with the previous logic of keeping the latest 100

events of the last 2 hours.

This should be a cheap nice-to-have, since now we can always know what

the latest integration event looked like for each integration

configuration. This will tie-in nicely with the next task of making the

latest integration event state visible in the integration card.

Also improved the clarity of the auto-deletion explanation in the modal.

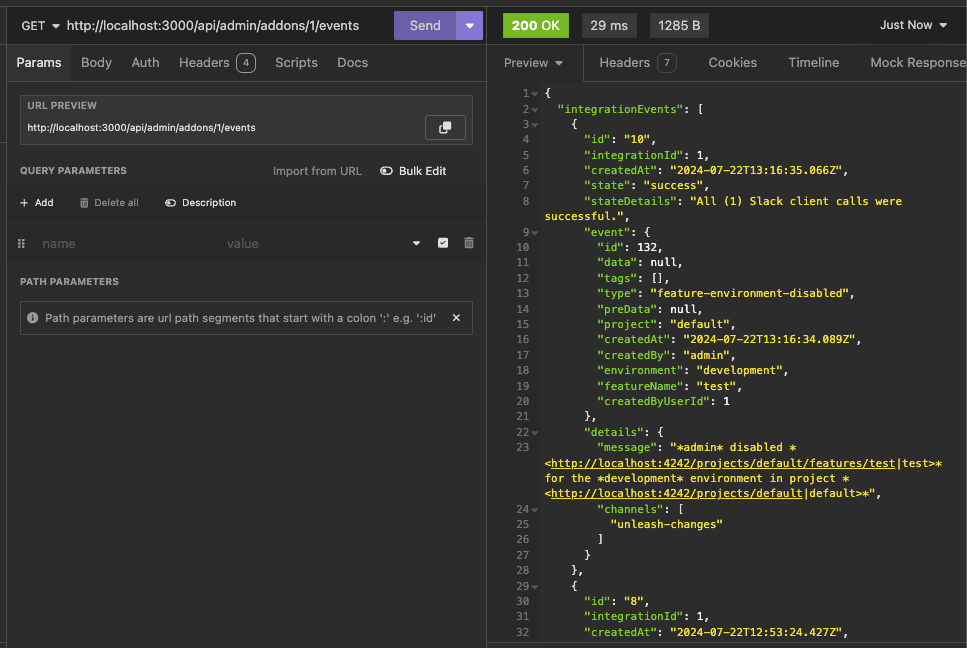

https://linear.app/unleash/issue/2-2439/create-new-integration-events-endpoint

https://linear.app/unleash/issue/2-2436/create-new-integration-event-openapi-schemas

This adds a new `/events` endpoint to the Addons API, allowing us to

fetch integration events for a specific integration configuration id.

Also includes:

- `IntegrationEventsSchema`: New schema to represent the response object

of the list of integration events;

- `yarn schema:update`: New `package.json` script to update the OpenAPI

spec file;

- `BasePaginationParameters`: This is copied from Enterprise. After

merging this we should be able to refactor Enterprise to use this one

instead of the one it has, so we don't repeat ourselves;

We're also now correctly representing the BIGSERIAL as BigInt (string +

pattern) in our OpenAPI schema. Otherwise our validation would complain,

since we're saying it's a number in the schema but in fact returning a

string.
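
The "string + pattern" representation mentioned above could look roughly like this. It is a sketch only: the constant name, the exact pattern, and the tiny validator are assumptions, not copied from the Unleash schema.

```typescript
// Hypothetical schema fragment: a BIGSERIAL id exposed as a digit-only string.
const bigIntIdSchema = {
    type: 'string',
    pattern: '^[0-9]+$',
    example: '7',
} as const;

// Minimal stand-in for a schema validator, checking only type and pattern.
function matchesBigIntIdSchema(candidate: unknown): boolean {
    return (
        typeof candidate === 'string' &&
        new RegExp(bigIntIdSchema.pattern).test(candidate)
    );
}

console.log(matchesBigIntIdSchema('7')); // true
console.log(matchesBigIntIdSchema(7)); // false
```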

This PR allows you to gradually lower constraint values, even if they're

above the limits.

It does, however, come with a few caveats because of how Unleash deals

with constraints:

Constraints are just json blobs. They have no IDs or other

distinguishing features. Because of this, we can't compare the current

and previous state of a specific constraint.

What we can do instead, is to allow you to lower the amount of

constraint values if and only if the number of constraints hasn't

changed. In this case, we assume that you also haven't reordered the

constraints (not possible from the UI today). That way, we can compare

constraint values between updated and existing constraints based on

their index in the constraint list.
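
The index-based comparison can be sketched as follows. This is an illustration under assumptions: the `StrategyConstraint` shape and the function names are hypothetical, and the sketch treats "not growing" as acceptable for a constraint that is already over the limit.

```typescript
// Hypothetical constraint shape: a JSON blob without an ID.
type StrategyConstraint = { contextName: string; operator: string; values?: string[] };

const valueCount = (c?: StrategyConstraint): number =>
    c && c.values ? c.values.length : 0;

function constraintValuesAllowed(
    updated: StrategyConstraint[],
    existing: StrategyConstraint[],
    limit: number,
): boolean {
    // Constraints can only be matched by index when their number is unchanged.
    const comparable = updated.length === existing.length;
    return updated.every((constraint, index) => {
        const count = valueCount(constraint);
        if (count <= limit) return true;
        // Over the limit: accept only if the count did not grow compared
        // to the existing constraint at the same index.
        return comparable && count <= valueCount(existing[index]);
    });
}

const existingConstraints: StrategyConstraint[] = [
    { contextName: 'userId', operator: 'IN', values: ['a', 'b', 'c', 'd', 'e'] },
];
const loweredConstraints: StrategyConstraint[] = [
    { contextName: 'userId', operator: 'IN', values: ['a', 'b', 'c', 'd'] },
];

console.log(constraintValuesAllowed(loweredConstraints, existingConstraints, 3)); // true
```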

It's not foolproof, but it's a workaround that you can use. There are a
few edge cases that pop up, but I don't think they're worth trying to
cover:

Case: If you **both** have too many constraints **and** too many

constraint values

Result: You won't be allowed to lower the amount of constraints as long
as the amount of constraint values is still above the limit.

Workaround: First, lower the amount of constraint values until you're

under the limit and then lower constraints. OR, set the constraint you

want to delete to a constraint that is trivially true (e.g. `currentTime

> yesterday` ). That will essentially take that constraint out of the

equation, achieving the same end result.

Case: You re-order constraints and at least one of them has too many

values

Result: You won't be allowed to (except for in the edge case where the

one with too many values doesn't move or switches places with another

one with the exact same amount of values).

Workaround: We don't need one. The order of constraints has no effect on

the evaluation.

https://linear.app/unleash/issue/2-2450/register-integration-events-webhook

Registers integration events in the **Webhook** integration.

Even though this touches a lot of files, most of it is preparation for

the next steps. The only actual implementation of registering

integration events is in the **Webhook** integration. The rest will

follow on separate PRs.

Here's an example of what this looks like in the database table:

```json
{
    "id": 7,
    "integration_id": 2,
    "created_at": "2024-07-18T18:11:11.376348+01:00",
    "state": "failed",
    "state_details": "Webhook request failed with status code: ECONNREFUSED",
    "event": {
        "id": 130,
        "data": null,
        "tags": [],
        "type": "feature-environment-enabled",
        "preData": null,
        "project": "default",
        "createdAt": "2024-07-18T17:11:10.821Z",
        "createdBy": "admin",
        "environment": "development",
        "featureName": "test",
        "createdByUserId": 1
    },
    "details": {
        "url": "http://localhost:1337",
        "body": "{ \"id\": 130, \"type\": \"feature-environment-enabled\", \"createdBy\": \"admin\", \"createdAt\": \"2024-07-18T17: 11: 10.821Z\", \"createdByUserId\": 1, \"data\": null, \"preData\": null, \"tags\": [], \"featureName\": \"test\", \"project\": \"default\", \"environment\": \"development\" }"
    }
}
```

This PR updates the limit validation for constraint numbers on a single

strategy. In cases where you're already above the limit, it allows you

to still update the strategy as long as you don't add any **new**

constraints (that is: the number of constraints doesn't increase).
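
The rule above boils down to a small check. A minimal sketch, assuming a hypothetical `exceedsConstraintLimit` helper rather than the method changed in this PR:

```typescript
// Returns true when the update should be rejected: the strategy ends up
// above the limit AND the number of constraints has increased.
function exceedsConstraintLimit(
    updatedCount: number,
    existingCount: number,
    limit: number,
): boolean {
    if (updatedCount <= limit) {
        return false; // within the limit, always fine
    }
    // Already above the limit: only reject if constraints were added.
    return updatedCount > existingCount;
}

console.log(exceedsConstraintLimit(12, 12, 10)); // false: unchanged, still editable
console.log(exceedsConstraintLimit(13, 12, 10)); // true: a new constraint was added
```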

A discussion point: I've only tested this with unit tests of the method

directly. I haven't tested that the right parameters are passed in from

calling functions. The main reason being that that would involve

updating the fake strategy and feature stores to sync their flag lists

(or just checking that the thrown error isn't a limit exceeded error),

because right now the fake strategy store throws an error when it

doesn't find the flag I want to update.

https://linear.app/unleash/issue/2-2453/validate-patched-data-against-schema

This adds schema validation to patched data, fixing potential issues of

patching data to an invalid state.

This can be easily reproduced by patching a strategy's constraints to be
an object (invalid) instead of an array (valid):

```sh
curl -X 'PATCH' \
  'http://localhost:4242/api/admin/projects/default/features/test/environments/development/strategies/8cb3fec6-c40a-45f7-8be0-138c5aaa5263' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '[
  {
    "path": "/constraints",
    "op": "replace",
    "from": "/constraints",
    "value": {}
  }
]'
```

Unleash will accept this because there's no validation that the patched

data actually looks like a proper strategy, and we'll start seeing

Unleash errors due to the invalid state.

This PR adapts some of our existing logic in the way we handle

validation errors to support any dynamic object. This way we can perform

schema validation with any object and still get the benefits of our

existing validation error handling.

This PR also takes the liberty to expose the full instancePath as

propertyName, instead of only the path's last section. We believe this

has more upsides than downsides, especially now that we support the

validation of any type of object.

This PR adds prometheus metrics for when users attempt to exceed the

limits for a given resource.

The implementation sets up a second function exported from the

ExceedsLimitError file that records metrics and then throws the error.

This could also be a static method on the class, but I'm not sure that'd

be better.
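
The "record, then throw" pattern can be sketched like this. It is an illustration only: the in-memory counter stands in for the Prometheus metric, and the error message, map name, and function signature are assumptions rather than the actual Unleash code.

```typescript
class ExceedsLimitError extends Error {
    constructor(resource: string, limit: number) {
        super(`Limit of ${limit} exceeded for ${resource}.`);
        this.name = 'ExceedsLimitError';
    }
}

// Stand-in for a Prometheus counter labelled by resource.
const limitExceededAttempts = new Map<string, number>();

// Second exported function: records the attempt, then throws the error.
function throwExceedsLimitError(resource: string, limit: number): never {
    const previous = limitExceededAttempts.get(resource) || 0;
    limitExceededAttempts.set(resource, previous + 1);
    throw new ExceedsLimitError(resource, limit);
}

try {
    throwExceedsLimitError('feature flag', 5000);
} catch (error) {
    console.log((error as Error).name); // ExceedsLimitError
}
console.log(limitExceededAttempts.get('feature flag')); // 1
```

Keeping the recording in a separate function leaves the error class itself free of side effects, which is one argument against the static-method alternative.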

PR #7519 introduced the pattern of using `createApiTokenService` instead

of newing it up. This usage was introduced in a concurrent PR (#7503),

so we're just cleaning up and making the usage consistent.

Deletes API tokens bound to specific projects when the last project they're mapped to is deleted.

---------

Co-authored-by: Tymoteusz Czech <2625371+Tymek@users.noreply.github.com>

Co-authored-by: Thomas Heartman <thomas@getunleash.io>

If you have SDK tokens scoped to projects that are deleted, you should

not get access to any flags with those.

---------

Co-authored-by: David Leek <david@getunleash.io>

This PR adds a feature flag limit to Unleash. It's set up to be

overridden in Enterprise, where we turn the limit up.

I've also fixed a couple bugs in the fake feature flag store.

This adds an extended metrics format to the metrics ingested by Unleash
and sent by running SDKs in the wild. Notably, we don't store this
information anywhere new in this PR; it is just streamed out to
VictoriaMetrics. The point of this project is insight, not analysis.

Two things to look out for in this PR:

- I've chosen to extend the registration event and also send it when we
receive metrics. This means that the new data is received on startup and
on heartbeat. This takes us in the direction of collapsing these two
calls into one at a later point.

- I've wrapped the existing metrics events in some "type safety". It
ain't much, because we have 0 type safety on the event emitter, so this
also has some if checks that look funny in TS but that actually check
whether the data shape is correct. Existing tests that check this are
more or less preserved.

This fixes the issue where project names that are 100 characters long

or longer would cause the project creation to fail. This is because

the resulting ID would be longer than the 100 character limit imposed

by the back end.

We solve this by capping the project ID to 90 characters, which leaves

us with 10 characters for the suffix, meaning you can have 1 billion

projects (999,999,999 + 1) that start with the same 90

characters (after slugification) before anything breaks.
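
The capping can be sketched as follows. The `slugifyProjectName` and `generateProjectId` helpers, the slug rules, and the suffix format (a hyphen plus up to nine digits) are assumptions made for illustration, not the implementation in this PR.

```typescript
const MAX_SLUG_LENGTH = 90; // cap from the description above
const MAX_ID_LENGTH = 100; // back-end limit from the description above

// Hypothetical slugifier: lower-case, non-alphanumerics collapsed to hyphens.
function slugifyProjectName(projectName: string): string {
    return projectName
        .toLowerCase()
        .replace(/[^a-z0-9]+/g, '-')
        .replace(/^-+|-+$/g, '');
}

// Cap the slug at 90 characters, leaving room for "-" plus up to 9 digits.
function generateProjectId(projectName: string, suffix?: number): string {
    const base = slugifyProjectName(projectName).slice(0, MAX_SLUG_LENGTH);
    return suffix === undefined ? base : `${base}-${suffix}`;
}

const longProjectName = 'a'.repeat(150);
console.log(generateProjectId(longProjectName).length); // 90
console.log(generateProjectId(longProjectName, 999999999).length); // 100
```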

It's a little shorter than it strictly has to be (we could probably get
away with 95 characters), but at this point, you're reaching into edge
case territory anyway, and I'd rather have a little too much wiggle room
here.

This PR removes the last two flags related to the project management

improvements project, making the new project creation form GA.

In doing so, we can also delete the old project creation form (or at

least the page, the form is still in use in the project settings).

This PR:

- adds a flag to anonymize user emails in the new project cards

- performs the anonymization using the existing `anonymise` function

that we have.

It does not anonymize the system user, nor does it anonymize groups. It

does, however, leave the gravatar url unchanged, as that is already

hashed (but we may want to hide that too).

This PR also does not affect the user's name or username. Considering

the target is the demo instance where the vast majority of users don't

have this (and if they do, they've chosen to set it themselves), this

seems an appropriate mitigation.

With the flag turned off:

With the flag on:

Fix project role assignment for users with `ADMIN` permission, even if

they don't have the Admin root role. This happens when e.g. users

inherit the `ADMIN` permission from a group root role, but are not

Admins themselves.

---------

Co-authored-by: Gastón Fournier <gaston@getunleash.io>

This PR adds metrics tracking for:

- "maxConstraintValues": the highest number of constraint values that

are in use

- "maxConstraintsPerStrategy": the highest number of constraints used on

a strategy

It updates the existing feature strategy read model that returns max

metrics for other strategy-related things.

It also moves one test into a more fitting describe block.

Instead of running exists on every row, we now join against the exists
subquery, which runs the query only once.

This decreased load time on my huge dataset from 2000ms to 200ms.

Also added tests that values still come through as expected.

**Upgrade to React v18 for Unleash v6. Here's why I think it's a good

time to do it:**

- Command Bar project: We've begun work on the command bar project, and

there's a fantastic library we want to use. However, it requires React

v18 support.

- Straightforward Upgrade: I took a look at the upgrade guide

https://react.dev/blog/2022/03/08/react-18-upgrade-guide and it seems

fairly straightforward. In fact, I was able to get React v18 running

with minimal changes in just 10 minutes!

- Dropping IE Support: React v18 no longer supports Internet Explorer

(IE), which is no longer supported by Microsoft as of June 15, 2022.

Upgrading to v18 in v6 would be a good way to align with this change.

TS updates:

* FC children has to be explicit:

https://stackoverflow.com/questions/71788254/react-18-typescript-children-fc

* forcing version 18 types in resolutions:

https://sentry.io/answers/type-is-not-assignable-to-type-reactnode/

Test updates:

* fixing SWR issue that we have always had but it manifests more in new

React (https://github.com/vercel/swr/issues/2373)

---------

Co-authored-by: kwasniew <kwasniewski.mateusz@gmail.com>

This PR removes the flag for the new project card design, making it GA.

It also removes deprecated components and updates one reference (in the

groups card) to the new components instead.

## About the changes

Removes the deprecated state endpoint, the state-service (despite the
service itself not having been marked as deprecated), and the file
import in server-impl. Leaves a TODO where the file import used to be,
as a marker for a replacement file import based on the new import/export
functionality.

## About the changes

This aligns us with the requirement of having ip in all events. After

tackling the enterprise part we will be able to make the ip field

mandatory here:

2c66a4ace4/src/lib/types/events.ts (L362)

In preparation for v6, this PR removes usage of and references to
`error.description`, favoring `error.message` instead (as mentioned in
#4380).

I found no references in the front end, so this might be (I believe it

to be) all the required changes.

This PR is part of #4380 - Remove legacy `/api/feature` endpoint.

## About the changes

### Frontend

- Removes the useFeatures hook

- Removes the part of StrategyView that displays features using this
strategy (it has not been working since v4.4)

- Removes 2 unused features entries from routes

### Backend

- Removes the /api/admin/features endpoint

- Moves a couple of non-feature related tests (auth etc) to use

/admin/projects endpoint instead

- Removes a test that was directly related to the removed endpoint

- Moves a couple of tests to the projects/features endpoint

- Reworks some tests to fetch features from projects features endpoint

and strategies from project strategies